Ethics & Responsible AI for Business Leaders

Introduction

Artificial intelligence (AI) is transforming industries, redefining decision-making, and accelerating innovation. However, as AI adoption increases, so do concerns about bias, transparency, privacy, and accountability. For business leaders, ethics and responsible AI are no longer optional considerations; they are strategic imperatives.

Organizations that fail to address ethical AI risks may face reputational damage, regulatory penalties, and loss of customer trust. In contrast, companies that prioritize responsible AI build long-term credibility and sustainable competitive advantage.

This article explores why ethics in AI matters, the core principles of responsible AI, and how leaders can implement governance frameworks effectively.

Why Ethics in AI Matters for Business Leaders

AI systems influence hiring decisions, credit approvals, pricing strategies, customer interactions, and even healthcare diagnostics. When AI models are poorly governed, they can:

- Reinforce bias and discrimination

- Violate data privacy regulations

- Make opaque decisions that are difficult to explain

- Create unintended harmful outcomes

Business leaders must ensure AI aligns with company values, regulatory requirements, and societal expectations.

Responsible AI is not just about compliance; it is about trust.

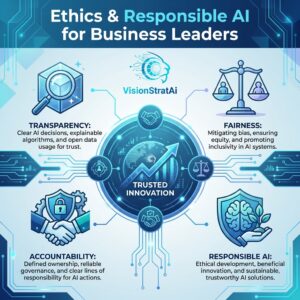

Core Principles of Responsible AI

To build ethical AI systems, organizations should focus on the following principles:

1. Transparency & Explainability

AI systems should provide clear explanations for decisions. Leaders must ensure stakeholders understand how AI models influence outcomes.

Transparent AI increases trust among customers, employees, and regulators.

2. Fairness & Bias Mitigation

AI models must be tested for discrimination based on gender, ethnicity, age, or other sensitive attributes, so that biased outcomes are caught before they cause harm.

Regular audits and diverse datasets help reduce algorithmic bias.
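For teams that want to see what such a test can look like in practice, here is a minimal Python sketch of one common check: comparing the rate of favorable outcomes across groups and flagging the model when the lowest rate falls below 80% of the highest (the "four-fifths rule" from US employment guidance). The function names, the sample data, and the choice of this particular metric are illustrative assumptions; real audits typically combine several fairness measures.

```python
from collections import defaultdict

def selection_rates(records):
    """Share of favorable outcomes per group.

    `records` is an iterable of (group, approved) pairs; `approved` is a bool.
    """
    totals = defaultdict(int)
    approvals = defaultdict(int)
    for group, approved in records:
        totals[group] += 1
        if approved:
            approvals[group] += 1
    return {group: approvals[group] / totals[group] for group in totals}

def disparate_impact_ratio(records):
    """Lowest group selection rate divided by the highest group selection rate."""
    rates = selection_rates(records)
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # no group receives favorable outcomes, so none is favored
    return min(rates.values()) / highest

# Hypothetical audit sample: (applicant group, did the model approve?)
decisions = [
    ("group_a", True), ("group_a", True), ("group_a", False), ("group_a", True),
    ("group_b", True), ("group_b", False), ("group_b", False), ("group_b", False),
]

FOUR_FIFTHS_THRESHOLD = 0.8  # assumed review threshold, borrowed from the four-fifths rule
ratio = disparate_impact_ratio(decisions)
if ratio < FOUR_FIFTHS_THRESHOLD:
    print(f"Flag for review: disparate impact ratio {ratio:.2f}")  # prints 0.33 for this sample
```

A ratio below the threshold does not prove discrimination, and a ratio above it does not rule it out; it is a trigger for human investigation, which is why regular audits still matter.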

3. Data Privacy & Security

Responsible AI requires strong data governance policies, including:

- Secure data storage

- Compliance with data protection laws

- Clear consent mechanisms

Protecting customer data strengthens brand reputation.

4. Accountability & Governance

Organizations should define clear accountability structures for AI decisions. Leaders must establish:

- AI ethics committees

- Risk assessment frameworks

- Clear ownership of AI initiatives

Governance ensures AI systems operate within defined ethical boundaries.

5. Human Oversight

AI should augment human decision-making, not replace it entirely. Critical decisions must include human review to prevent unintended consequences.

Human-in-the-loop models increase safety and reliability.
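One common way to put human-in-the-loop review into practice is to let the system act automatically only when the model is confident and the decision is low-stakes, and to route everything else to a person. The Python sketch below assumes the model exposes a confidence score between 0 and 1; the `Decision` fields, the `high_stakes` flag, and the 0.9 threshold are hypothetical and would be set per use case.

```python
from dataclasses import dataclass

CONFIDENCE_THRESHOLD = 0.9  # hypothetical value; tune to the use case and risk appetite

@dataclass
class Decision:
    outcome: str       # the model's proposed outcome, e.g. "approve" or "decline"
    confidence: float  # assumed model confidence score in [0, 1]
    high_stakes: bool  # hypothetical flag set by business rules (e.g. large loans)

def route(decision: Decision) -> str:
    """Return "auto" if the model may act alone, otherwise "human_review"."""
    if decision.high_stakes or decision.confidence < CONFIDENCE_THRESHOLD:
        return "human_review"
    return "auto"

print(route(Decision("approve", 0.97, high_stakes=False)))  # auto
print(route(Decision("decline", 0.97, high_stakes=True)))   # human_review
print(route(Decision("approve", 0.60, high_stakes=False)))  # human_review
```

High-stakes cases go to a person regardless of confidence because a model can be confidently wrong.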

Real-World Ethical AI Challenges

Across industries, companies have faced challenges such as:

- Biased hiring algorithms

- Discriminatory credit scoring systems

- Lack of transparency in automated decisions

- Data misuse scandals

These cases highlight the importance of proactive AI governance rather than reactive crisis management.

How Business Leaders Can Implement Responsible AI

Develop an AI Ethics Framework

Create internal guidelines aligned with company values and industry regulations.

Conduct AI Risk Assessments

Evaluate potential risks, such as biased outcomes, privacy violations, and the impact of errors on the people affected, before deploying AI systems.

Invest in AI Training

Leaders and teams should understand ethical AI principles, regulatory requirements, and risk mitigation strategies.

Training ensures responsible AI is embedded into corporate culture.

Monitor & Audit AI Systems

Regular audits and performance evaluations help detect bias and unintended outcomes.

Because data and user behavior change over time, monitoring must continue after deployment rather than stop at launch.
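Continuous monitoring often includes checking whether the data a model sees in production still resembles the data it was validated on. A widely used measure for this is the Population Stability Index (PSI), which sums (actual share − expected share) × ln(actual share / expected share) over score bins. The Python sketch below assumes model scores between 0 and 1 split into ten equal-width bins; the sample scores are made up, and the 0.25 alert level is a common rule of thumb rather than a formal standard.

```python
import math

def population_stability_index(baseline, recent, bins=10):
    """PSI between two lists of scores, each assumed to lie in [0, 1]."""
    def bin_shares(scores):
        counts = [0] * bins
        for score in scores:
            index = min(int(score * bins), bins - 1)  # a score of exactly 1.0 goes in the last bin
            counts[index] += 1
        # Floor empty bins at a tiny share so the logarithm below stays defined
        return [max(count / len(scores), 1e-6) for count in counts]

    expected = bin_shares(baseline)
    actual = bin_shares(recent)
    return sum((a - e) * math.log(a / e) for e, a in zip(expected, actual))

# Made-up scores: at validation time vs. in a recent production week
baseline_scores = [0.1, 0.2, 0.3, 0.4, 0.5, 0.6, 0.7, 0.8, 0.9, 0.95]
recent_scores = [0.7, 0.75, 0.8, 0.85, 0.9, 0.9, 0.92, 0.95, 0.97, 0.99]

PSI_ALERT_LEVEL = 0.25  # common rule of thumb for a significant shift, not a formal standard
psi = population_stability_index(baseline_scores, recent_scores)
if psi > PSI_ALERT_LEVEL:
    print(f"Score distribution has shifted (PSI = {psi:.2f}); schedule a review")
```

A drift alert does not by itself mean the model has become unfair, but it is a signal to rerun bias checks and performance evaluations.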

Business Benefits of Responsible AI

Ethical AI is not just about avoiding risk; it can also deliver tangible business value:

- Stronger customer trust

- Improved brand reputation

- Regulatory compliance

- Reduced legal exposure

- Sustainable long-term growth

Responsible AI strengthens competitive positioning in increasingly regulated markets.

Conclusion

Ethics and responsible AI are critical for business leaders navigating digital transformation. As AI systems become more integrated into strategic decision-making, organizations must prioritize transparency, fairness, accountability, and governance.

Companies that proactively implement responsible AI frameworks will not only mitigate risk but also build trust and resilience in a rapidly evolving digital economy.

Responsible AI is the foundation of sustainable innovation.